Skate to Where the Puck Is Going

The model was never the business. As intelligence turns into a commodity, every lab is racing up the stack to where the margin lives — and OpenAI just filed for its IPO from the back of the line.

Future-Proof

Trending

Claude 5 Fable Vibe Check: Anthropic Opens the Door to a Mythos-Class Model

On June 9, 2026, Anthropic did something it had spent two months publicly hesitating to do: it put...

Intelligence Demand Is Infinite

Last quarter our AI bill barely moved. Token usage went up and to the right, the kind of...

Dr. Zuck in the Metaverse of Madness

The cash kings of the last decade are suddenly passing the hat. Whether that's a war chest or a ransom note depends on which one you're holding.

This Time It’s Different

AI valuations are headed for a 50 to 70 percent correction. Scott Galloway called it. Here's why the bubble pops and the technology wins in the same crash.

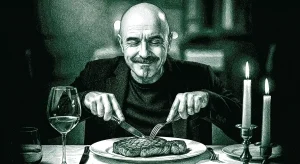

Ignorance Is Bliss

The state moved on AI this week — a toothless order on top, a populist revolt underneath. Everyone wants the steak. Nobody wants to watch the cow get butchered.

The Meter’s Running

Subsidized intelligence is over. The meter that finally priced the machine is turning toward the seat next to it.