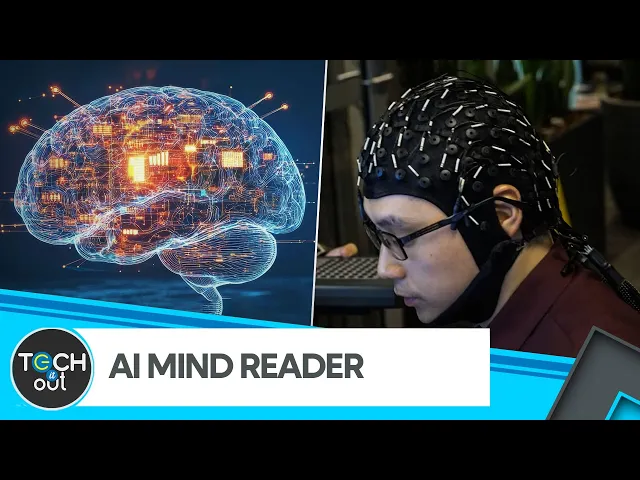

AI Model Transcribes Human Thoughts To Text

Brainwave-to-text AI turns thoughts into words

In a remarkable fusion of neuroscience and artificial intelligence, researchers have achieved a breakthrough that once seemed possible only in science fiction: the ability to convert human thoughts directly into text. A team at the University of Texas at Austin has developed an AI system capable of decoding brainwaves and transforming them into coherent written words, opening up transformative possibilities for how we might communicate in the future.

The breakthrough explained

The research team's AI model interprets neural activity recorded through non-invasive methods such as electroencephalography (EEG) and functional magnetic resonance imaging (fMRI). The system decodes these recordings into meaningful text with surprising accuracy, though the technology remains in its early stages.

- The AI uses neural decoding to analyze brain activity patterns associated with specific thoughts or words, then translates these patterns into readable text without requiring invasive brain implants.

- Researchers trained the model by having participants view or listen to sentences while their brain activity was recorded, creating a dataset of neural patterns linked to specific linguistic content.

- The current system achieves about 40% accuracy in transcribing thoughts to text – still far from perfect, but representing a significant advance from previous attempts and proving the concept is viable.

- Ethical considerations around privacy, consent, and potential misuse are being actively discussed alongside the technical development, with researchers advocating for careful governance of this powerful technology.
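
To make the training-and-decoding idea in the points above more concrete, here is a minimal toy sketch. It is not the researchers' model: it assumes each word produces a fixed feature pattern plus noise, uses synthetic numbers in place of real EEG or fMRI recordings, and swaps in a simple nearest-centroid classifier. All names (`make_recording`, `train_centroids`, `decode`, the four-word vocabulary) are hypothetical and chosen only for illustration.

```python
import math
import random


def make_recording(pattern, noise, rng):
    """Simulate one noisy recording of a word's underlying pattern (synthetic data)."""
    return [x + rng.gauss(0.0, noise) for x in pattern]


def train_centroids(samples):
    """Average the feature vectors recorded for each word.

    samples: list of (features, word) pairs. Returns {word: mean feature vector}.
    """
    sums, counts = {}, {}
    for features, word in samples:
        acc = sums.setdefault(word, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[word] = counts.get(word, 0) + 1
    return {w: [v / counts[w] for v in acc] for w, acc in sums.items()}


def decode(features, centroids):
    """Return the word whose average pattern is closest to the new recording."""
    return min(centroids, key=lambda w: math.dist(features, centroids[w]))


def accuracy(samples, centroids):
    """Fraction of recordings decoded to the word that was actually presented."""
    hits = sum(1 for features, word in samples if decode(features, centroids) == word)
    return hits / len(samples)


# Assumption: a tiny vocabulary and 16 made-up features per recording.
rng = random.Random(0)
vocab = ["yes", "no", "water", "help"]
patterns = {w: [rng.uniform(-1, 1) for _ in range(16)] for w in vocab}

# "Training": participants are presented with known words while activity is recorded.
train = [(make_recording(patterns[w], 0.5, rng), w) for w in vocab for _ in range(20)]
# Held-out recordings the decoder has never seen.
test = [(make_recording(patterns[w], 0.5, rng), w) for w in vocab for _ in range(10)]

centroids = train_centroids(train)
print(f"held-out accuracy: {accuracy(test, centroids):.0%}")
```

An accuracy figure like the roughly 40% quoted above would be measured the same way in principle, by comparing decoded output against what participants were actually shown or heard. Real recordings are far noisier, and open-ended language is far larger than a four-word vocabulary, so this toy setup says nothing about how hard the real problem is.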

Why this matters: The transformative potential

The most compelling aspect of this research is how it could revolutionize accessibility for people with communication disabilities. For individuals with conditions like ALS, locked-in syndrome, or those who have suffered strokes that affect speech, a direct brain-to-text interface could restore their ability to communicate with the world without physical movement or speech.

This is more than a technical achievement: it is a potential lifeline for millions globally who struggle with communication barriers. Current assistive technologies often require some physical capability, such as eye movement or single-finger control. A mature version of this technology could work directly from thought, opening communication channels for people with even the most severe physical limitations.

The implications extend beyond healthcare into how all humans might interact with technology in the future. As AI development accelerates and computing becomes more ubiquitous, thought-based interfaces could eventually
