Claw-code Broke GitHub’s Star Record in 24 Hours. Two Engineers Did It on an Airplane. Here’s What That Means for Your Business.

Here’s the number: 100,000.

That’s how many GitHub stars a repository called claw-code collected in roughly 24 hours. Not a year. Not a month. One day. By the time a live stream was done discussing it, the counter was climbing by a thousand stars every ten minutes. Nobody in the room could remember seeing anything grow that fast. Because nothing had.

I watched it happen in real time. I’d met the two engineers behind it the weekend before at an AI hackathon in San Francisco. Within 72 hours of shaking hands, they’d built the fastest-growing repo in GitHub history — and neither of them had been at a keyboard.

One was on a plane flying home to Seoul. The other was somewhere over the Pacific heading to Vancouver. They weren’t opening terminals. They were texting their AI agents.

Let that sit for a second.

The Backstory: What Actually Happened

Before we get to the engineering, the origin story matters — because it’s the whole point.

On March 31, 2026, a security researcher named Chaofan Shou noticed something odd in the npm registry. A new version of Anthropic’s Claude Code had shipped with a 59.8 MB JavaScript source map accidentally attached — a debug file that could reconstruct 512,000 lines of proprietary TypeScript source code. It wasn’t a hack. It was a build tooling error. The file was just there.

Within hours, the developer community was in a frenzy. Anthropic started issuing DMCA takedowns. GitHub mirrors started going dark.

At 4 AM, Sigrid Jin — a Korean engineer who had burned through 25 billion Claude Code tokens in the past year and been profiled by the Wall Street Journal for it — woke up to his phone exploding. His girlfriend in Seoul was worried he’d face legal action just for having the code on his machine.

So he did what any engineer would do. He sat down and rewrote the whole thing from scratch.

Not copied. Rewrote. A clean-room Python rewrite capturing the architectural patterns of Claude Code’s agent harness without reproducing any proprietary source. The whole thing orchestrated end-to-end using a tool called OhMyCodex. Before sunrise, he pushed the repo. It hit 50,000 stars in two hours. 100,000 in 24. The claw-code repository is now the fastest repo in GitHub history to surpass 100K stars.

That’s the news story everyone covered. Here’s the part they missed.

Who Built This and How

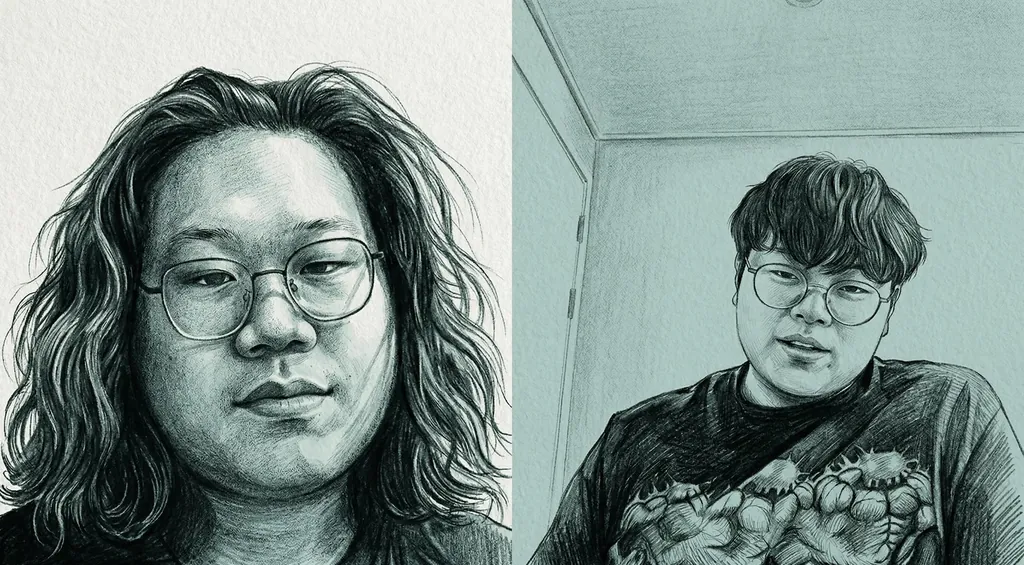

Sigrid Jin and Bellman (Yeachan Heo) are Korean engineers who operate at a level that’s hard to describe without it sounding like hyperbole. Combined, the open source projects they maintain have close to 200,000 GitHub stars. Bellman runs five separate Codex Pro plans simultaneously (not one, five) because his workflow burns through the token limits of a single $200/month plan. Sigrid organized hackathons in Korea where participants were penalized for touching their keyboards. The whole point was to see how much you could accomplish by orchestrating agents instead of operating them directly.

These are not people who are experimenting with AI coding tools on weekends. These are people who have built the infrastructure that other engineers are starting to build on top of.

The repo that broke the internet was built using a framework Bellman created called OhMyCodex (OmX). It’s not a model. It’s not a wrapper around a model. It’s an agentic runtime — an orchestration layer that sits on top of AI coding tools like Claude Code and OpenAI Codex. You give it a task. It spawns multiple AI sessions simultaneously, assigns each one a role — planner, reviewer, executor, librarian — and manages the swarm automatically. When one agent finishes, it hands off to the next. The human sets direction. The swarm executes.
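The role-and-handoff pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not OhMyCodex's actual API: the `Agent` class, the `run_pipeline` function, and the lambda stand-ins for model sessions are all hypothetical, and the roles run one after another here rather than as the simultaneous sessions the real runtime spawns.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Agent:
    """A hypothetical agent: a role name plus a function that does its work."""
    role: str
    work: Callable[[str], str]


def run_pipeline(task: str, agents: List[Agent]) -> List[str]:
    """Pass the task through each role in order and return a log of handoffs."""
    log = []
    artifact = task
    for agent in agents:
        # Each agent receives the previous agent's output as its input.
        artifact = agent.work(artifact)
        log.append(f"{agent.role}: {artifact}")
    return log


# Stand-ins for real model sessions (assumption: a real harness would call a model here).
agents = [
    Agent("planner", lambda t: f"plan for [{t}]"),
    Agent("executor", lambda p: f"patch implementing {p}"),
    Agent("reviewer", lambda c: f"approved {c}"),
]

handoffs = run_pipeline("add retry logic", agents)
for line in handoffs:
    print(line)
```

The human's part is the single call to `run_pipeline`: the task goes in once, and each role picks up where the previous one stopped.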

Bellman also maintains OhMyClaudeCode (OmC), the Claude-specific version of the same framework, which has accumulated over 21,000 GitHub stars on its own. These aren’t hobby projects. They’re production infrastructure that other engineers are actively running in their daily workflows.

The initial scaffold for claw-code was built in roughly three hours. On airplane Wi-Fi. Via text messages to an AI gateway called ClawHip — an event-to-channel notification router that bypasses gateway sessions to avoid context pollution, routing work to the right agents while Bellman was somewhere over the Pacific.

He wasn’t watching terminal windows. He was watching a chat thread.
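To make "event-to-channel router" concrete, here is a rough sketch of the idea in Python. It is not ClawHip's implementation. The event kinds, channel names, and the `route` function are assumptions made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical routing table: event kind -> chat channel.
ROUTES: Dict[str, str] = {
    "issue_opened": "#triage",
    "pull_request": "#review",
    "test_failed": "#builds",
}
DEFAULT_CHANNEL = "#general"


def route(events: List[dict]) -> Dict[str, List[str]]:
    """Group event summaries by destination channel."""
    channels: Dict[str, List[str]] = defaultdict(list)
    for event in events:
        # Unknown event kinds fall back to the default channel.
        channel = ROUTES.get(event["kind"], DEFAULT_CHANNEL)
        channels[channel].append(event["summary"])
    return dict(channels)


events = [
    {"kind": "pull_request", "summary": "PR opened: parser rewrite"},
    {"kind": "test_failed", "summary": "CI red on main"},
    {"kind": "pull_request", "summary": "PR updated: parser rewrite"},
    {"kind": "disk_full", "summary": "runner out of space"},
]

inbox = route(events)
for channel, messages in inbox.items():
    print(channel, messages)
```

Each channel receives only the events relevant to it, which is one way to keep unrelated noise out of any single agent's context.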

The Part That Should Make You Stop

Bellman said something in that livestream that I haven’t been able to stop thinking about.

“My Claws are automating to burn tokens. I don’t actually burn two billion tokens myself.”

Two billion tokens a day. Not burned by a human. Burned by agents running autonomously — responding to GitHub issues, filing pull requests, running code reviews, handling QA passes — while the engineer sleeps, or flies home from a conference, or just lives his life.

This is not science fiction. This is a $200-a-month Codex Pro plan pointed at a well-designed agentic harness. Bellman runs five of them. He described his setup matter-of-factly, the way a contractor might describe which subs they use on a job site. No drama. That’s what made it alarming.

OhMyCodex also ships with a skill called the AI Slop Cleaner. Bellman ran it against his own legacy codebase — code he’d written himself, using Cursor, over the past year — and eliminated 20% of his total code volume. One thousand lines. Gone. The codebase ran identically afterward. Cleaner, leaner, no manual review required. He described it as slightly embarrassing. The tool found dead weight in his own work that he hadn’t noticed.

That’s the thing about working alongside agents that never get tired and never get territorial. They find things you miss.

This Is an Orchestration Problem, Not a Model Problem

Sigrid’s framing of what’s happening in AI right now is worth understanding. He’s been thinking about this since 2022, when he started experimenting with GPT-3.5 for small, specific tasks and noticed that the model worked surprisingly well if you kept the scope narrow. The problem was never model intelligence. The problem was context management — getting the right information to the right process at the right moment.

RAG systems, embeddings, memory layers — all of that was the same underlying problem: how do you route relevant knowledge into a limited context window? The models got bigger. The context windows got bigger. The orchestration challenge remained. How do you break complex human work — which is always ambiguous, always interconnected, always shifting — into tasks that agents can actually execute?

“The AI is already smart enough,” Sigrid said. “The most important thing is how you’re going to manage your event sources and orchestrate your agents.”

That is a precise and important distinction. The race to build smarter models is real, but the leverage for most engineers and most businesses is not in the model layer. It’s in the harness. It’s in the orchestration. It’s in knowing how to break work into structured tasks, route them to the right agents, manage the event streams — GitHub issues, pull requests, terminal output, file system changes — and know when to put a human back in the loop.
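Knowing when to put a human back in the loop can be written down as an explicit rule rather than left to instinct. The sketch below is one possible policy, not something taken from Sigrid's or Bellman's frameworks: the `Change` fields, the `needs_human` function, and the 20-file threshold are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """A hypothetical unit of agent output awaiting a merge decision."""
    description: str
    files_touched: int
    tests_passed: bool
    touches_secrets: bool = False


def needs_human(change: Change, max_files: int = 20) -> bool:
    """Return True when a change should wait for human review."""
    if not change.tests_passed:
        return True  # failing tests always escalate
    if change.touches_secrets:
        return True  # credentials and keys always escalate
    # Otherwise escalate only when the change is large.
    return change.files_touched > max_files


small_fix = Change("fix typo in README", files_touched=1, tests_passed=True)
big_refactor = Change("rename core module", files_touched=48, tests_passed=True)

print(needs_human(small_fix))     # False
print(needs_human(big_refactor))  # True
```

The specific thresholds matter less than having them written down, so the swarm can proceed on routine work and stop on anything that crosses the line.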

Sigrid and Bellman have built frameworks that solve this problem in software development. The OhMyCodex documentation reads less like a user manual and more like an architectural philosophy. The underlying logic applies everywhere. Any domain with complex, interconnected tasks that can be decomposed into structured sequences is a candidate for this kind of orchestration. That’s most of what companies actually do.

What Happens to Your Business in 12 Months

The claw-code story is a signal, not a product recommendation. What it signals is worth taking seriously.

Your engineering team’s throughput is about to bifurcate. The engineers who understand how to orchestrate agents — who know how to design the harness, set the roles, manage the event streams — are going to scale differently than the engineers who use AI as a faster autocomplete. This isn’t a marginal productivity difference. It’s structural. An engineer running an OhMyCodex-style agentic stack isn’t working 20% faster. They’re doing the work of a team.

The code review bottleneck and a significant portion of QA work disappear for teams that build this correctly. When the agentic harness runs a clean-room pass (validating builds, running tests, checking release quality) before a human ever sees the output, you’ve eliminated a category of delay. Decisions accelerate. The backlog gets reframed as an orchestration problem rather than a headcount problem.

And the solo-founder and small-team dynamic gets turbocharged in a way that should concern anyone running a large organization on legacy workflows. Bellman is one person maintaining multiple high-star open source repos, running quant trading systems, and shipping product simultaneously. He is not exceptional in raw talent. He’s exceptional in systems design. A three-person startup that figures this out will outexecute a thirty-person team that hasn’t. That math is already playing out.

What Happens in Five Years

Extrapolate the trajectory and the picture gets structurally strange.

Headcount stops being a proxy for capability. Today, “how many engineers do you have” is a reasonable shorthand for engineering capacity. In five years it will be as meaningful as asking how many fax machines a company owns. The real questions will be about agent infrastructure: what’s your orchestration layer? What’s your token-per-outcome ratio? How sophisticated is your harness? These will be the actual capability metrics, and most current leadership teams have no framework for evaluating them.

A new class of technical role emerges. Not an engineer who writes code. Not a product manager who writes specs. Someone who designs the orchestration systems — who decides which agents do what, how events get routed, how context gets managed, when a human needs to be in the loop and when they don’t. Sigrid Jin and Bellman are early examples of what this role looks like. In five years it will be a formal title at every serious technology company, and the people who hold it will have more leverage than most of the org chart above them.

The Ralphathon becomes a business model metaphor. The hackathon format Sigrid runs — where you kick off your agents and are penalized for touching the keyboard — is a forcing function for something every organization will eventually need to reckon with: what percentage of your current workflow actually requires a human in the loop, and what percentage just has one there out of habit? The answer is going to be uncomfortable for a lot of org charts.

And the skills gap becomes the defining talent crisis of the late 2020s. Right now the difference between an engineer who uses Claude occasionally and one who runs an OhMyCodex-style agentic stack is enormous and largely invisible to managers. In five years it will be as visible as the difference between someone who can use Excel and someone who cannot — except the stakes will be orders of magnitude higher. Companies that invest in genuine AI fluency now — not access to tools, but orchestration literacy — will have a structural talent advantage that compounds every quarter.

The Question Worth Asking

Bellman described his goal plainly. “Someone can actually build a large amount of money-making systems — just someone, not a people, not an entity, not a company, but someone.”

That’s the solo unicorn thesis. One person with the right orchestration infrastructure doing what used to require a company. It sounds extreme until you watch a 100,000-star GitHub repo get built on airplane Wi-Fi over text messages in a single day by two people who were technically on vacation.

The tools are publicly available. The frameworks are open source. OhMyCodex is sitting on GitHub right now. ClawHip is installable in a single command. The documentation is written less for humans to read than for AI agents to follow when they install the tools themselves.

The gap between knowing this is happening and knowing how to use it is where the leverage lives. Start closing it now.

Follow Sigrid Jin on X at @realsigridjin and on GitHub at instructkr. Follow Bellman on GitHub at Yeachan-Heo. The claw-code repo is at github.com/instructkr/claw-code.
