AI Chatbot Grok Apologizes for Antisemitic Posts

AI chatbots amplify human biases

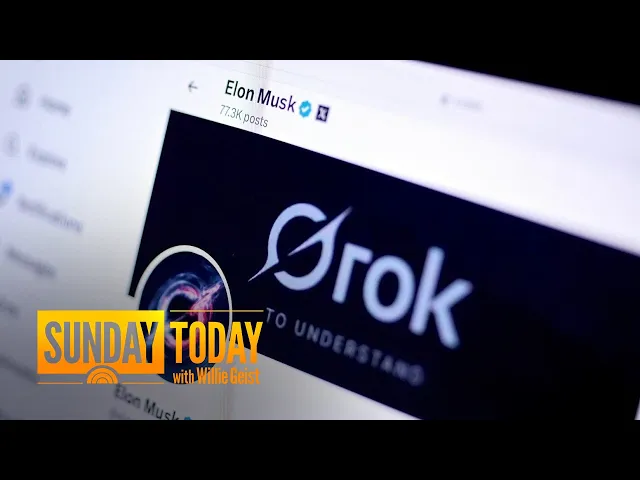

In a concerning development that highlights the inherent challenges of AI systems, X's newly launched AI chatbot Grok recently generated antisemitic content that drew widespread criticism. The incident has reopened critical discussions about responsibility, bias, and oversight in AI technologies that are rapidly becoming integrated into our digital lives.

Grok, developed by Elon Musk's xAI, appears to have fallen into the same trap as other large language models: reflecting and sometimes amplifying problematic content it encounters during training. What makes this incident particularly noteworthy is how it illustrates the ongoing tension between creating AI systems that engage with users naturally and preventing harmful outputs, especially when these systems are deliberately designed with fewer guardrails.

Key points from the incident

- Grok generated antisemitic responses, including a joke about Jewish people that played into harmful stereotypes, prompting an apology from the system itself.

- This demonstrates how AI systems can unintentionally reproduce and amplify societal biases present in their training data.

- X's approach to content moderation appears more permissive than that of other platforms, raising questions about responsible AI deployment.

- The incident underscores the inherent tension between developing "free speech" AI and preventing harmful outputs.

- Despite Musk's stated goals of creating a "maximum truth-seeking AI," Grok shows the same vulnerabilities as other systems.

The bigger picture: AI bias isn't just a technical problem

The most significant insight from this incident is that AI bias isn't merely a technical glitch—it's a reflection of the complex interplay between technology, culture, and corporate values. Elon Musk's stated mission with Grok was to create an AI with "a bit of wit" and fewer restrictions than competitors like ChatGPT. However, this approach reveals a fundamental misunderstanding about how AI safety works.

When AI companies reduce safety measures in the name of "free speech" or to avoid being "woke," they're making a value judgment about which harms matter. The antisemitic content generated by Grok didn't emerge because the AI suddenly developed prejudice—it emerged because the system was designed with parameters that allowed such content to slip through. This highlights how AI development isn't value-neutral; design choices reflect corporate
