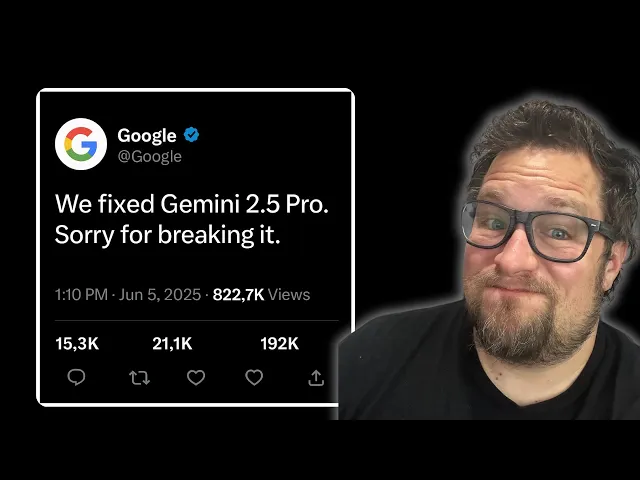

Did Google Fix Gemini 2.5 Pro?

Gemini 2.5 Pro: real progress or clever marketing?

Google's latest AI model, Gemini 2.5 Pro, arrives with considerable fanfare and impressive demos, but how much of the hype reflects genuine advancement? The new model showcases strong multimodal capabilities, with claims of longer context handling and improved reasoning – but the real question is whether these improvements address the fundamental issues that plagued earlier versions or are simply incremental enhancements wrapped in clever marketing.

Key elements of the Gemini 2.5 Pro update

- Context length expanded to 2 million tokens, theoretically enabling the model to process entire books, lengthy videos, or massive code repositories at once – a significant leap beyond previous limitations
- Multimodal reasoning improved, allowing the system to more effectively process and interpret combinations of text, images, audio and video inputs in a more integrated manner
- Reasoning capabilities enhanced through both architectural changes and training approaches, with Google claiming more consistent responses and fewer hallucinations

The most striking aspect of Gemini 2.5 Pro isn't any single technical feature but rather Google's evolving approach to AI development. The company appears to be shifting from chasing raw performance metrics toward addressing more nuanced user experience issues. This evolution reflects broader industry recognition that benchmark scores alone don't translate to real-world utility – a mature perspective that acknowledges AI systems must be evaluated on their practical reliability rather than their ability to perform impressive but carefully curated demos.

This maturation comes at a critical time for Google. As competition in the AI space intensifies with rivals like OpenAI's GPT-4o and Anthropic's Claude models advancing rapidly, Google faces pressure to demonstrate that its considerable AI investments are yielding tangible results. The company's strategy appears to focus on making AI more accessible and useful rather than simply more powerful – a distinction that matters tremendously for enterprise adoption where consistency often trumps occasional brilliance.

What's particularly noteworthy is how Gemini 2.5 Pro reflects Google's attempt to reconcile two competing priorities: pushing technical boundaries while addressing fundamental usability issues. Early versions of Gemini suffered from problems ranging from political controversies to basic factual inaccuracies. The new version attempts to maintain creative capabilities while implementing guardrails against problematic outputs – a bal
